
Sample size power analysis

1/16/2024

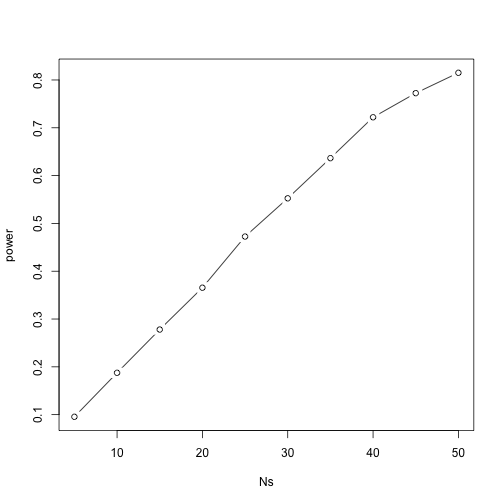

This tutorial introduces power analysis using R. Power analysis is a method primarily used to determine the appropriate sample size for empirical studies. This tutorial is aimed at intermediate and advanced users of R. It showcases how to perform power analyses for basic inferential tests using the pwr package (Champely 2020) and for mixed-effects models (generated with the lme4 package) using the simr package (Green and MacLeod 2016a). A list of very recommendable papers discussing research on effect sizes and power analyses on linguistic data can be found here. The aim is not to provide a fully-fledged analysis but rather to show and exemplify a handy method for estimating the power of experimental and observational designs and how to implement this in R.

Here are some main findings from these papers, as stated in Brysbaert and Stevens (2018) and on the website:

- In experimental psychology we can do replicable research with 20 participants or fewer if we have multiple observations per participant per condition, because we can turn rather small differences between conditions into effect sizes of d > …
- The more sobering finding is that the required number of observations is higher than the numbers currently used (which is why we run underpowered studies). The ballpark figure we propose for RT experiments with repeated measures is 1600 observations per condition (e.g., 40 participants and 40 stimuli per condition).
- The figure of 1600 observations applies when you start a new line of research and don't know what to expect.
- Standardized effect sizes in analyses over participants (e.g., Cohen's d) depend on the number of stimuli that were presented. Hence, you must include the same number of observations per condition if you want to replicate the results. The fact that the effect size depends on the number of stimuli also has implications for meta-analyses.

To explore this issue, let us have a look at some distributions of samples with varying features sampled from either one or two distributions.

Let us start with the distribution of two samples (N = 30) sampled from the same population. The means of the samples are very similar, and a t-test confirms that we can assume that the samples are drawn from the same population (as the p-value is greater than .05).

Let us now draw another two samples (N = 30), but from different populations where the effect of group is weak (the population difference is small). Based on the t-test results in the distribution plot, we would assume that the data represent two samples from the same population (which is false) because the p-value is higher than .05.

Let us briefly check whether the effect size, a Cohen's d of 0.2, is correct (for this, we increase the sample size dramatically to get very accurate estimates). If the above effect size is correct (Cohen's d = 0.2), then the reported effect size should be 0.2 (or -0.2). This is so because Cohen's d represents the distance between group means measured in standard deviations (in our example, the standard deviation is 10 and the difference between the means is 2, i.e., 20 percent of a standard deviation, which is equal to a Cohen's d value of 0.2).

Let's check the effect size now (we will only do this for this distribution, but you can easily check for yourself that the effect sizes provided in the plot headers are correct by adapting the code chunk below and using the numbers provided in the plot headers).

```r
# generate two vectors with numbers using the means and sd from above
# (N and the means 10/12 are placeholders giving a difference of 2 at sd = 10)
num1 <- rnorm(1000000, mean = 10, sd = 10)
num2 <- rnorm(1000000, mean = 12, sd = 10)
tt <- t.test(num1, num2, alternative = "two.sided")
effectsize::effectsize(tt) # Cohen's d | 95% CI
```
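The two scenarios described above (two samples from the same population, and two samples from populations that differ by a weak effect of d = 0.2) can be reproduced in base R without the effectsize package. This is a minimal sketch under stated assumptions: the population means (10 and 12), the seed, and the hypothetical helper `cohens_d_pooled()` are illustrative choices, not the tutorial's own code. The helper computes Cohen's d as the mean difference divided by the pooled standard deviation.

```r
# Minimal sketch; assumptions: population means 10 and 12, sd = 10, seed 42
set.seed(42)

# Hypothetical helper: Cohen's d using the pooled standard deviation
cohens_d_pooled <- function(x, y) {
  nx <- length(x)
  ny <- length(y)
  pooled_sd <- sqrt(((nx - 1) * var(x) + (ny - 1) * var(y)) / (nx + ny - 2))
  (mean(x) - mean(y)) / pooled_sd
}

# Scenario 1: two samples (N = 30) from the same population
same1 <- rnorm(30, mean = 10, sd = 10)
same2 <- rnorm(30, mean = 10, sd = 10)
p_same <- t.test(same1, same2)$p.value

# Scenario 2: two samples (N = 30) from populations 2 units (0.2 sd) apart
weak1 <- rnorm(30, mean = 10, sd = 10)
weak2 <- rnorm(30, mean = 12, sd = 10)
p_weak <- t.test(weak1, weak2)$p.value

# Large-sample check: with 1e6 values per group, d should be close to -0.2
big1 <- rnorm(1e6, mean = 10, sd = 10)
big2 <- rnorm(1e6, mean = 12, sd = 10)
d_big <- cohens_d_pooled(big1, big2)

cat("p (same population, N = 30):", round(p_same, 3), "\n")
cat("p (weak effect, N = 30):", round(p_weak, 3), "\n")
cat("Cohen's d (N = 1e6 per group):", round(d_big, 3), "\n")
```

With only 30 observations per group, the weak-effect p-value will usually, though not always, exceed .05; rerunning with other seeds makes this visible. The large-sample estimate, by contrast, should land very close to -0.2 (negative because `big1` has the smaller mean).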

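A power analysis turns the weak-effect observation into numbers. The tutorial uses the pwr package, whose `pwr.t.test()` takes d directly; the sketch below instead uses base R's `stats::power.t.test()` so that it runs without extra packages. It assumes the weak-effect scenario (mean difference 2, sd 10, hence d = 0.2) and a two-sided alpha of .05; these values are chosen to match the example and are not results from the original post.

```r
# Power of a two-sample t-test for the weak effect discussed above
# (assumptions: difference in means = 2, sd = 10, i.e. d = 0.2; alpha = .05)

# Power when each group has only N = 30 observations
low_power <- power.t.test(n = 30, delta = 2, sd = 10, sig.level = 0.05,
                          type = "two.sample", alternative = "two.sided")

# Sample size per group needed to reach 80% power (n left unspecified)
needed <- power.t.test(power = 0.8, delta = 2, sd = 10, sig.level = 0.05,
                       type = "two.sample", alternative = "two.sided")

cat("Power with N = 30 per group:", round(low_power$power, 2), "\n")
cat("N per group for 80% power:", ceiling(needed$n), "\n")
```

With 30 observations per group the power is far below the conventional 80%, and several hundred observations per group are needed to detect a d of 0.2 reliably, which is consistent with the findings above about underpowered studies.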